Scope

Following the report of the Dagstuhl Seminar on Language Engineering for Model-Driven Software Development, model transformation may be considered from two perspectives, one targeting languages, the other dealing with contents. That distinction is critical and should provide the basis for process design and automation policies.

When source and target models use the same language, transformations (aka endogenous) are performed within a single semantic envelope, with contents supported by a consistent set of concepts. Provided that suitable traceability mechanisms are in place, original contents can be restored by reversing the decisions taken along the development path, among them: target parameters, design patterns, functional consolidations, functional or business requirements.
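A minimal Python sketch of the traceability idea, assuming a model can be represented as a plain dictionary of elements expressed in one language: each endogenous step records the decision that motivated it together with a snapshot of the model, so that the step can later be reversed. The names `TracedModel`, `TraceEntry` and `add_surrogate_key` are hypothetical, and snapshotting is only one of several possible trace mechanisms.

```python
from copy import deepcopy
from dataclasses import dataclass, field


@dataclass
class TraceEntry:
    decision: str   # rationale recorded for the step (pattern, target parameter, ...)
    before: dict    # snapshot of the model taken before the step


@dataclass
class TracedModel:
    # All elements are expressed with the same set of concepts (one language).
    elements: dict
    trace: list = field(default_factory=list)

    def apply(self, decision, transform):
        """Apply an endogenous step and keep what is needed to undo it."""
        self.trace.append(TraceEntry(decision, deepcopy(self.elements)))
        self.elements = transform(deepcopy(self.elements))

    def revert(self):
        """Undo the latest step and return the decision that justified it."""
        entry = self.trace.pop()
        self.elements = entry.before
        return entry.decision


def add_surrogate_key(elements):
    # Hypothetical target parameter: every entity gets a technical key.
    for props in elements.values():
        props["attributes"].append("pk")
    return elements


if __name__ == "__main__":
    model = TracedModel({"Order": {"attributes": ["id", "date"]}})
    model.apply("target parameter: surrogate keys", add_surrogate_key)
    print(model.elements)   # Order now carries a 'pk' attribute
    print(model.revert())   # the decision that was undone
    print(model.elements)   # original contents restored
```

Because the source and target share the same concepts, undoing a step is a matter of replaying recorded decisions backwards; nothing has to be reinterpreted.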

Things are more complex if source and target models are built using different languages, each with its own concepts and semantics. Corresponding transformations (aka exogenous) call for a mapping that will necessarily entail some loss in translation. That difficulty can be illustrated by the difference between code generation and “reverse” engineering: given that programming languages are a subset of modeling ones, the former can be seen as endogenous; that is not the case for the latter because the semantics of design models are not limited to programming. As a consequence, backtracking (aka round-tripping) from programs to models will have to fill the conceptual gap that separates models and programs (to quote Bran Selic: how do you build a pig from its sausages?).
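The asymmetry can be sketched with a hypothetical mapping table, assuming three kinds of model links (composition, aggregation, plain association) that a target programming language renders with a single construct, an object reference. Going forward requires no invention; going backward yields a set of candidate concepts from which the original one cannot be singled out without additional information. The concept names and the `generate` and `reverse` functions are illustrative assumptions, not a standard mapping.

```python
# Hypothetical modeling concepts and the code construct each one maps to.
MODEL_TO_CODE = {
    "composition": "reference",
    "aggregation": "reference",
    "association": "reference",
    "inheritance": "subclass",
}


def generate(links):
    """Forward mapping: every model concept has exactly one code counterpart."""
    return [(src, MODEL_TO_CODE[kind], dst) for src, kind, dst in links]


def reverse(code_links):
    """Backward mapping: a code construct may stand for several model concepts."""
    inverse = {}
    for concept, construct in MODEL_TO_CODE.items():
        inverse.setdefault(construct, []).append(concept)
    return [(src, inverse[construct], dst) for src, construct, dst in code_links]


if __name__ == "__main__":
    model = [("Order", "composition", "OrderLine"), ("Order", "association", "Customer")]
    code = generate(model)
    print(code)           # both links become plain references
    print(reverse(code))  # each reference yields three candidate concepts
```

Only the inheritance link survives a round trip unchanged, because its mapping happens to be one-to-one; the other concepts are the sausages from which the pig cannot be rebuilt.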

Sequenced vs Continuous Metamorphosis

Models are built for a purpose, using universal or specific languages. In software engineering, initial models are meant to capture requirements, final ones to specify software components, and models in between to manage whatever steps are deemed necessary by contexts and/or methods. When milestones can be avoided, “agile” development may take the shortcut and iterate between requirements and deliveries. Otherwise the sequence of models must take into account the different stakeholders and manage their respective inputs throughout the development process. Those inputs constitute the original contents of models. Following the model driven engineering paradigm, such sequences can be grouped into three model layers: computation independent (CIM), platform independent (PIM), and platform specific (PSM).

Once the original contents meant to be added at each layer have been properly identified and organized, the question is to what extent, and under what conditions, model contents can be “engineered”, by transformation or any other means.

Given those constraints, model transformation can be used for three kinds of purpose:

- To carry over non-original contents across models, e.g. data types and formats.

- To translate model contents into a different modeling language, e.g. business rules.

- To map model contents across different contexts according to analysis or design decisions, e.g. business entities into relational tables.
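The third purpose can be illustrated with a minimal sketch, assuming business entities described by typed attributes and references to other entities. Logical types are carried over through a lookup table (first purpose), while primary and foreign key conventions stand for design decisions (third purpose). `Entity`, `SQL_TYPES` and `entity_to_table` are hypothetical names, and the naming conventions are arbitrary choices.

```python
from dataclasses import dataclass, field

# Logical types carried over to SQL types (hypothetical choices).
SQL_TYPES = {"string": "VARCHAR(255)", "integer": "INTEGER", "date": "DATE"}


@dataclass
class Entity:
    name: str
    attributes: dict                                  # attribute name -> logical type
    references: list = field(default_factory=list)    # names of referenced entities


def entity_to_table(entity):
    """Map a business entity to a relational table definition."""
    table = entity.name.lower()
    # Design decision: one surrogate primary key per table.
    columns = [f"{table}_id INTEGER PRIMARY KEY"]
    # Carry-over: attribute types translated through the lookup table.
    columns += [f"{attr} {SQL_TYPES[kind]}" for attr, kind in entity.attributes.items()]
    # Design decision: references become foreign keys to surrogate keys.
    for ref in entity.references:
        target = ref.lower()
        columns.append(f"{target}_id INTEGER REFERENCES {target}({target}_id)")
    return f"CREATE TABLE {table} (\n  " + ",\n  ".join(columns) + "\n);"


if __name__ == "__main__":
    print(entity_to_table(Entity("Customer", {"name": "string"})))
    print(entity_to_table(Entity("Invoice", {"issued_on": "date", "amount": "integer"}, ["Customer"])))
```

Keeping the carried-over part (the type table) apart from the decided part (key conventions) makes explicit which contents a transformation merely transports and which it introduces.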

While model transformations can be used at any level, they are especially beneficial when applied selectively to architecture layers.

Meta-Models

Model transformation may appear under different guises, yet most approaches make use of meta-models, usually based upon the Meta Object Facility (MOF) proposed by the OMG.

Meta-models are models of models; in other words, they single out a subset of relevant features and get rid of the leftovers. The aim is to use a meta-language to convert a set of descriptions in one language into their equivalent in another one.

Unlike models, which describe instances of business objects and activities, meta-models describe the modeling artifacts used by models; to take an example, Customer is a modeling type, UML Actor is a meta-modeling one.
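The distinction between the two levels can be sketched as follows, assuming a drastically simplified meta-class that only requires a `role` property; this is not the actual UML definition of Actor. `Customer` is an element of a model, typed by the meta-class `Actor`, which belongs to the meta-model. `MetaClass`, `ModelElement` and `conforms` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class MetaClass:
    """Meta-model level: a kind of modeling artifact (e.g. Actor)."""
    name: str
    required_properties: tuple


@dataclass
class ModelElement:
    """Model level: a business concept typed by a meta-class (e.g. Customer)."""
    name: str
    meta: MetaClass
    properties: dict = field(default_factory=dict)

    def conforms(self):
        # An element conforms when it provides every property its meta-class requires.
        return all(p in self.properties for p in self.meta.required_properties)


# Simplified meta-class, not the UML specification of Actor.
ACTOR = MetaClass("Actor", ("role",))
customer = ModelElement("Customer", ACTOR, {"role": "buys products"})
```

A transformation written against `MetaClass` works for any actor-like element, whatever business concept it stands for, which is what makes meta-models the natural support for transformation rules.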

In theory, meta-languages are supposed to be blind to domain specific semantics; in practice their effectiveness depends on the nature of the transformation and the qualities of the targeted modeling languages. Meta-languages are at their best when applied to clear and compact target languages, but they scale poorly because of the exponential complexity of rules and the need to deal with each and every idiosyncrasy of a language. Moreover, performance degrades critically with ambiguities, because even limited semantic uncertainties often pollute the meaning of otherwise trustworthy neighbors, generating cascades of doubtful translations. Hence the need for clearly defined purposes:

- Separation of concerns: since models can take into account more than a single concern (e.g. business and technical), meta-models can be used to refine perspectives.

- Language translation: in that case the objective is to single out language constructs in order to transfer model contents into another language.

Conversely, when transformation rules are too broadly defined, there is a risk of “flight for abstraction”: a model will lose its grip on its original concern without gaining a substitute.

Functional vs Generative Solutions

Following the parallel with linguistics, meta-models can be understood as functional or formal grammars:

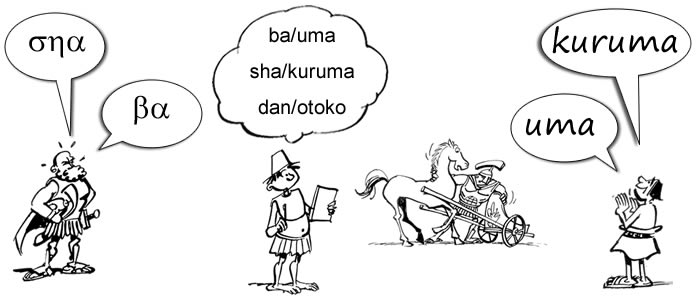

- Functional approaches take languages as the outcome of evolutionary processes bound by human speaking capabilities and driven by social necessities. Along that perspective translations are performed at word (or artifact) level.

A functional approach of transformation through metamorphosis

- Formal approaches, e.g. Chomskyan generative grammars, assume that language is a structure of the human mind based upon a single inner grammar shared by all human beings. Under that understanding, translations are performed at phrase (or model) level, on the assumption that supporting structures can be extracted based upon some universal (modeling) language.

While the Meta Object Facility (MOF) belongs to the functional paradigm, a generative approach would tackle the problem from the other side, assuming that, given a set of concerns, all models share some common semantics which can be interpreted by a virtual machine.

Just as models describe business domains and applications, meta-models refer to model artifacts, whose syntax and semantics are described using a single meta-language. As with language grammars, meta-models provide the basis for model transformation independently of content semantics.

Assuming that system engineering could provide a comprehensive, consistent and unambiguous set of concerns, any model built within such a framework could then be parsed into a generic representation before being processed. In order to describe such supporting structures (“charpentes” in French), a kernel of UML (UML#) needs to be circumscribed.

Extension, Construction, Transformation

Model Driven Engineering is first and foremost about process management. In other words, any operation performed on models should be defined by modeling constraints on the one hand and by organizational responsibilities on the other.

- Inputs are provided by stakeholders responsible for domains, use cases, architectures, platforms, and configurations.

- Constructions (analysis or design) may be partially supported by automated tools, yet they entail explicit responsibilities as set by contracts.

- Transformations don’t entail responsibilities and can therefore be fully supported by automated tools, provided that the parameters used are explicitly documented.
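These responsibilities can be expressed as a simple process check, sketched here under the assumption that each operation on models is recorded as a step of one of the three kinds: inputs and constructions must name a responsible party, while transformations must document their parameters. The `Step` and `StepKind` types and the `check` policy are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class StepKind(Enum):
    INPUT = "input"                    # provided by a stakeholder
    CONSTRUCTION = "construction"      # analysis or design, bound by contract
    TRANSFORMATION = "transformation"  # automated, entails no responsibility


@dataclass
class Step:
    kind: StepKind
    description: str
    responsible: Optional[str] = None          # stakeholder or contract owner
    parameters: dict = field(default_factory=dict)


def check(step):
    """Return the process violations found for one step (hypothetical policy)."""
    issues = []
    needs_owner = step.kind in (StepKind.INPUT, StepKind.CONSTRUCTION)
    if needs_owner and not step.responsible:
        issues.append(f"{step.description}: no responsible party")
    if step.kind is StepKind.TRANSFORMATION and not step.parameters:
        issues.append(f"{step.description}: parameters not documented")
    return issues


if __name__ == "__main__":
    steps = [
        Step(StepKind.INPUT, "use cases", responsible="business analyst"),
        Step(StepKind.CONSTRUCTION, "logical design"),
        Step(StepKind.TRANSFORMATION, "PSM to code", parameters={"platform": "JEE"}),
    ]
    for s in steps:
        print(s.kind.value, check(s))
```

Such a check only enforces the division of labor stated above; it says nothing about the quality of the inputs or constructions themselves.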

Transformation vs Refactoring

Model transformation follows the arrow of time, taking expectations and commitments one step further towards application deployment; refactoring goes the other way, taking back past implementations in a bid to understand their purpose and to reinstate them. Whereas the former, by going forward, may change the contracts, the latter, by looking backward, is meant to freeze whatever was agreed in the past:

- Implementation: legacy code is wrapped and redeployed as it was.

- Source: legacy code is refactored and redeployed.

- Functionalities: applications are re-engineered.

More generally, what can be done from model to code is not necessarily possible between model layers: as each layer embodies specific information, some derived, some decided, transformation policies will have to fence off what is added at each stage, together with its associated rationale.
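One way to fence off contents is to tag each element with the layer where it was added, whether it was derived or decided, and the associated rationale. The sketch below assumes the three MDA layers and two origin values; `Contribution` and `decided_at` are hypothetical names. Contents decided at a layer are the ones a transformation cannot regenerate and must carry over as such.

```python
from dataclasses import dataclass

LAYERS = ("CIM", "PIM", "PSM")
ORIGINS = ("derived", "decided")


@dataclass(frozen=True)
class Contribution:
    element: str
    layer: str       # layer where the content was added
    origin: str      # "derived" by transformation or "decided" by a stakeholder
    rationale: str   # why it was added

    def __post_init__(self):
        # Reject tags outside the assumed vocabulary.
        if self.layer not in LAYERS or self.origin not in ORIGINS:
            raise ValueError(f"invalid layer or origin for {self.element}")


def decided_at(contributions, layer):
    """Elements decided at a given layer: they cannot be regenerated by transformation."""
    return [c.element for c in contributions if c.layer == layer and c.origin == "decided"]


if __name__ == "__main__":
    history = [
        Contribution("Customer", "CIM", "decided", "business requirement"),
        Contribution("CustomerRecord", "PIM", "derived", "carried over from Customer"),
        Contribution("customer_cache", "PSM", "decided", "performance constraint"),
    ]
    print(decided_at(history, "PSM"))
```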

Transformation Profiles

While the interpretations of CIMs and PIMs may be surprisingly diverse, there is a broad consensus about what platform specific models are about, how to build them, and how to use them to implement system components. Given the combined benefits of PSMs, design patterns and code generation, it may be tempting to generalize this approach by applying automated transformation to computation independent and platform independent models. But that would rely on the implicit assumption that there is no added value between models or, put another way, that engineering processes are pointless.

Hence the need for explicit rationales based upon model contents:

- Technical: generate code from models, possibly targeting different platforms (a).

- Development: generate views (on shared data models) or modules (e.g. for standards) to be used by different projects (b).

- Enterprise: generate models (e.g. canonical data model) supporting the consolidation of different business objects and activities within the same functional architecture (c).
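For the technical profile (a), code generation from a platform specific description can be sketched as template-based text generation, assuming one type map per target platform. The platform names, type maps and the `generate_code` function are illustrative assumptions; actual generators usually rely on dedicated template or model-to-text languages.

```python
# Hypothetical type maps, one per target platform.
TYPE_MAPS = {
    "python": {"string": "str", "integer": "int"},
    "typescript": {"string": "string", "integer": "number"},
}


def generate_code(entity, attributes, platform):
    """Render one entity (name -> logical type attributes) as source text."""
    if platform not in TYPE_MAPS:
        raise ValueError(f"unsupported platform: {platform}")
    types = TYPE_MAPS[platform]
    if platform == "python":
        lines = "\n".join(f"    {name}: {types[t]}" for name, t in attributes.items())
        return f"@dataclass\nclass {entity}:\n{lines}\n"
    # TypeScript: an interface with one typed member per attribute.
    lines = "\n".join(f"  {name}: {types[t]};" for name, t in attributes.items())
    return f"interface {entity} {{\n{lines}\n}}\n"


if __name__ == "__main__":
    attrs = {"name": "string", "age": "integer"}
    print(generate_code("Customer", attrs, "python"))
    print(generate_code("Customer", attrs, "typescript"))
```

The same model feeds both targets; only the platform parameter changes, which is why this profile can be fully automated once that parameter is documented.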
