Preamble

Data mining explores business opportunities and competitive advantage; requirements analysis considers supporting applications. Both use models: the former’s are predictive and ephemeral, the latter’s descriptive (or prescriptive) and perennial.

As the generalization of digitized environments calls for more integration of business and software engineering processes, understanding the relationship between data mining and requirements analysis could significantly improve process maturity and agility.

Data vs Requirements Analysis

Nowadays the success of a wide range of enterprises critically depends on two achievements:

- Mapping business models to changing environments by sorting through facts, capturing the relevant data, and processing the whole into meaningful and up-to-date information. That can be achieved through analysis models mapping business expectations to supporting systems.

- Putting that information into effective use through business processes and supporting systems. That is done through systems architecture and design models meant to prescribe how to build software artifacts.

Those challenges are converging: under the pressure of market forces and technological advances, most of the traditional fences between business channels and IT systems are crumbling, putting the focus on the functional integration between data mining and production systems. That’s where predictive models can help by anchoring descriptive models to moving markets and by cross-feeding analysis and operations. How that can be achieved has been the bread and butter of good corporate governance for some time, but there has been less interest in the third branch, namely how data analysis (predictive models) could “inform” business requirements (descriptive models).

From Data to Information

Facts are not given but must be captured through a symbolic description of actual observations. That entails some observer set on a task, using a mix of conceptual and technical apparatus. Data mining and requirements analysis are practical realizations of that process:

- Data mining relies on analytic tools to extract revealing information that could be used to chart opportunities along business models.

- Requirements analysis relies on business processes and users’ practice to extract symbolic descriptions that will be used to build models of supporting applications.

If both walk the path from data to information, their objectives are different: the former’s is to improve business decisions by making sense of actual observations; the latter’s is to build system surrogates from the symbolic descriptions of actual business objects and activities.

Anchors & Structures: Plasticity of Business Entities

Perhaps paradoxically, business agility calls for terra firma because nimble trades must be rooted in corporate identity and business continuity. As a consequence, the first step of requirements analysis should be to associate individual business objects or activities with stable and consistent identification mechanisms, and to group them with regard to that mechanism:

- External entities with natural (person) or designed identity (car).

- Symbolic entities for roles (customer) or commitments (maintenance contract).

- Actual activities (promotion campaign) and events (sale) or business logic (promotion).

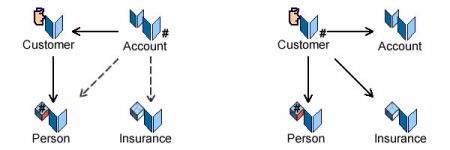

Conversely, as the aim of data analysis is to explore every business angle, individual observations are supposed to be moved across groups; yet, since the units identified by data analysis will have to be aligned with the ones described by requirements analysis, moves must also keep track of identities. That dilemma between continuity of identified structures on one side, plasticity of functional aspects on the other side, can be illustrated by banks which, in response to marketing requirements, had to shift from account (internal identification) to customer (external identification) based systems.

That challenge can be overcome by linking the identification of symbolic entities to external anchors.
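A minimal Python sketch of that anchoring, built on the bank example above. All names (`Customer`, `Account`, `national_id`, `segment`, `group_by`) are illustrative assumptions rather than the design of any actual banking system. Accounts are symbolic entities with an internal identifier, each linked to a customer identified by an external anchor, so the same individuals can be regrouped freely without losing track of identities.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    """External entity: identified by an anchor defined outside the system."""
    national_id: str  # hypothetical external identifier
    name: str


@dataclass
class Account:
    """Symbolic entity: internal identifier plus a link to the external anchor."""
    account_no: str                # internal identification
    owner: Customer                # external anchor
    balance: float
    segment: str = "unclassified"  # functional feature, free to change


def group_by(accounts, key):
    """Regroup accounts along any criterion while keeping identities intact."""
    groups = defaultdict(list)
    for account in accounts:
        groups[key(account)].append(account.account_no)
    return dict(groups)


alice = Customer("ID-001", "Alice")
bob = Customer("ID-002", "Bob")
accounts = [
    Account("ACC-1", alice, 1200.0),
    Account("ACC-2", alice, 90000.0),
    Account("ACC-3", bob, 300.0),
]

# Account-based view (internal identification).
by_account = group_by(accounts, lambda a: a.account_no)
# Customer-based view (external identification): same individuals, regrouped.
by_customer = group_by(accounts, lambda a: a.owner.national_id)

print(by_customer)
```

Because every account keeps its reference to the external anchor, moving from the account-based view to the customer-based view is a regrouping, not a migration of identities.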

Profiles & Features: Versatility of Business Opportunities

As noted above, requirements and data analysis are set on the same road but driven by different forces: the former tries to group individuals with regard to identification mechanisms before fleshing them out with relevant features; the latter tries to group individuals with given identities according to features and opportunity profiles. Yet, what could appear as collision courses may become a meeting of minds if both courses are charted with regard to variants analysis.

From the requirements perspective the primary concern is to distinguish between structural and functional variants:

- Structural variants are bound to identities, i.e. set up front for the respective life-cycle of individual business objects or transactions. As a consequence they cannot be changed without undermining business continuity. Moreover, being part and parcel of descriptors (e.g. types and use cases), their change will affect engineering processes.

- Functional variants may vary during the respective life-cycle of individual business objects or transactions. As a consequence they can be changed without undermining business continuity, and changes in descriptors (e.g. partitions and scenarios) can be managed without affecting engineering processes.
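The distinction can be sketched in a few lines of Python. The `Vehicle` class and its attributes are hypothetical: the body type stands for a structural variant, fixed with the identity when the object is created, while the status stands for a functional variant that may change during the life-cycle.

```python
class Vehicle:
    """Business object with one structural and one functional variant."""

    def __init__(self, plate, body_type, status="in service"):
        self._plate = plate          # identity
        self._body_type = body_type  # structural variant, set up front
        self.status = status         # functional variant, may change

    @property
    def plate(self):
        return self._plate

    @property
    def body_type(self):
        # Read-only: no setter is defined, so assignment raises AttributeError.
        return self._body_type


van = Vehicle("AB-123", "van")
van.status = "under repair"   # allowed: functional variant

try:
    van.body_type = "truck"   # rejected: structural variant
except AttributeError:
    print("body_type is structural and cannot be changed")
```

In this sketch a change of body type would require a new object (and a new identity), which mirrors the point that structural changes affect continuity whereas functional ones do not.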

From the data mining perspective the objective is to improve the benefits of information systems for decision-making processes:

- Static: how to classify individuals so as to reduce the uncertainty of predictions.

- Dynamic: how to classify business options so as to reduce the uncertainty of decisions.

Since those objectives are set for individuals, constraints on continuity and consistency can be dealt with independently of the description of symbolic surrogates.

It ensues that perspectives can be adjusted by factoring out the constraints of continuity and consistency for business objects (e.g. cars), agents (e.g. customers), and processes (e.g. repairs). Profiles for agents (a), behaviors (b), and business options (c) could then be freely explored and tailored with regard to changes in business environment and objectives.

Applying Data Analysis to Requirements

Not surprisingly, data analysis techniques can be used to adjust perspectives. For that purpose a sample of individuals (business objects and operations) representing the population targeted by requirements would have to be submitted to basic mining routines. Borrowing a catalog from F. Provost & T. Fawcett:

- Classification: estimates the probability for each individual (objects or operations) to belong to a set of classes; can be used to assess the closeness of the variants (respectively power-types or execution paths) identified by requirements analysis.

- Regression: reverse classification; estimates how much of the values of individual features can be explained by the proposed classifications.

- Similarity: a shallow version of classification; can be used to assess the distance between variants and consolidate the proposed classifications.

- Clustering: a deep version of classification; can be used to distinguish between shallow and natural classifications.

- Co-occurrence: deals with behavioral variants; can be used to distinguish between functional and structural classifications.

- Profiling: reverse of co-occurrence; can be used to consolidate functional and structural classifications.

- Link prediction: can be used to define relationships.

- Data reduction: eliminates redundant individuals; can be used to consolidate requirements and refine test scenarios.

- Causal modeling: brings together business logic (events and rules) and users’ decisions; should provide the backbone of test scenarios.
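As an illustration of the similarity and classification items, the sketch below checks whether variants proposed by requirements analysis are well separated in a sample of individuals. It uses a plain nearest-centroid rule, chosen here for brevity and not taken from the catalog's authors; the sample, the variant names, and the two features are made up for the example.

```python
from math import dist
from statistics import mean


def centroids(sample):
    """Average feature vector of each proposed variant."""
    by_variant = {}
    for variant, features in sample:
        by_variant.setdefault(variant, []).append(features)
    return {
        variant: tuple(mean(column) for column in zip(*rows))
        for variant, rows in by_variant.items()
    }


def agreement(sample):
    """Share of individuals whose nearest centroid is their proposed variant."""
    centers = centroids(sample)
    hits = 0
    for variant, features in sample:
        nearest = min(centers, key=lambda v: dist(features, centers[v]))
        hits += nearest == variant
    return hits / len(sample)


# Hypothetical sample: (proposed variant, (annual mileage in 1000 km, age in years))
sample = [
    ("fleet", (60, 2)), ("fleet", (55, 3)), ("fleet", (70, 1)),
    ("private", (12, 8)), ("private", (15, 6)), ("private", (10, 9)),
]

print(agreement(sample))
```

An agreement close to 1 suggests that the proposed variants match groups that the data supports; a low value would hint that the classification is shallow and may need to be reconsidered.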

Besides the direct benefits for requirements, such procedures may help to bridge the span between data and requirements analysis and significantly improve processes’ capability and maturity level.

Business Objectives & Enterprise Architecture Capabilities

Data mining being first and foremost about competitive edge, it relies on a timely and effective coupling between enterprise capabilities and business opportunities. But the dilemma between continuity and plasticity described above for business objects and processes reappears at enterprise level: how to reconcile architecture, by nature perennial, with the agility needed to make the best of changing and competitive environments?

As an architectural big bang is arguably a last-resort option, answers to that question must be progressive and local: if changes are to be swift and pertinent they must be both circumscribed and leveraged to the relevant parts of architecture. Taking an (amended) leaf from the Zachman framework, its sixth column (“Why”) could be reset as a line for business and operational objectives that would cross the original five columns instead of the architecture layers. Using a pentagonal representation of enterprise architecture, that line would be set as circling the outer range.

It is worth noting that setting objectives on a line crossing the columns of capabilities instead of a column crossing the lines of layers means that objectives are set at enterprise level and their cascading impact traced and managed through layers.

Conceptual Models & Business Contexts

But even that updated framework doesn’t take into account the fundamental changes in business environments. Once secure behind organizational and technical fences, enterprises must now navigate through open digitized business environments and markets. For business processes it means a seamless integration with supporting applications; for corporate governance it means keeping track of heterogeneous and changing business contexts and concerns while assessing the capability of organizations and systems to cope, adjust, and improve.

As long as environments were a hotchpotch of actual and symbolic artifacts the pros and cons of integration could be balanced. But the generalization of digital flows and transactions has upended the balance: there is no more room or time for latency and enterprises must bring all symbolic representations (business, organization, and systems) under a common conceptual roof.

A canonical approach would be to introduce a conceptual indexing scheme open to extensions but with its footprint defined by business processes and systems functionalities. That would ensure a better integration of processes and supporting engineering but would do nothing for the modeling gap between enterprise architecture and external contexts. Bridging that gap could be achieved with ontologies.

Conceptual Loop: Ontologies & Business Intelligence

As far as data mining is concerned, three kinds of operations are to be considered:

- Data understanding gives form and semantics to raw material.

- Business understanding charts business contexts and concerns in terms of objects and processes descriptions.

- Modeling consolidates data and business understanding into descriptive, predictive, and operational models.

While traditional approaches fall short when tasked with iterative modeling of unstructured data, ontologies may fare better because their explicit aim is only to describe what could exist in a domain of discourse:

- They are made of categories of things, beings, or phenomena; as such they may range from simple catalogs to philosophical doctrines.

- They are driven by cognitive (i.e. non-empirical) purposes, namely the validity and consistency of symbolic representations.

- They are meant to be directed at specific domains of concerns, whatever they can be: politics, religion, business, astrology, etc.

With regard to models, only the second point sets ontologies apart: contrary to models, ontologies are about understanding and are not supposed to be driven by empirical purposes. It ensues that ontologies can be understood as conceptual (aka canonical) models, used as such for business analysis and extended with purposes for systems analysis and design.

In addition to the integration with enterprise architectures, the benefits of ontologies for business intelligence would be twofold.

On one side, and whatever their use, ontologies could be aligned with the nature of contexts and their impact on business and enterprise governance, e.g.:

- Institutional: mandatory semantics sanctioned by regulatory authority, steady, changes subject to established procedures.

- Professional: agreed upon semantics between parties, steady, changes subject to established procedures.

- Corporate: enterprise defined semantics, changes subject to internal decision-making.

- Social: pragmatically defined semantics, no authority, volatile, continuous and informal changes.

- Personal: customary semantics defined by named individuals.

On the other side ontologies could be defined according to the nature of targeted items, namely terms, documents, symbolic representations, or actual objects and phenomena. That would outline four basic concerns that may or may not be combined:

- Thesaurus: ontologies covering terms and concepts.

- Document Management: ontologies covering documents with regard to topics.

- Organization and Business: ontologies pertaining to enterprise organization, objects and activities.

- Engineering: ontologies pertaining to the symbolic representation of products and services.
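The two classifications above can be read as the two axes of an indexing scheme. The Python sketch below is only one possible encoding, with hypothetical names (`Context`, `Concern`, `OntologyEntry`, `select`) and made-up entries: each entry is tagged with one kind of context and with one or more concerns, since the concerns may or may not be combined.

```python
from dataclasses import dataclass
from enum import Enum


class Context(Enum):
    INSTITUTIONAL = "institutional"
    PROFESSIONAL = "professional"
    CORPORATE = "corporate"
    SOCIAL = "social"
    PERSONAL = "personal"


class Concern(Enum):
    THESAURUS = "terms and concepts"
    DOCUMENTS = "documents and topics"
    ORGANIZATION = "organization, objects and activities"
    ENGINEERING = "products and services"


@dataclass(frozen=True)
class OntologyEntry:
    label: str
    context: Context      # who governs the semantics
    concerns: frozenset   # what kind of items are targeted


def select(entries, context=None, concern=None):
    """Labels of entries matching the given context and/or concern."""
    return [
        e.label for e in entries
        if (context is None or e.context is context)
        and (concern is None or concern in e.concerns)
    ]


# Made-up entries, for illustration only.
entries = [
    OntologyEntry("VAT rate", Context.INSTITUTIONAL, frozenset({Concern.THESAURUS})),
    OntologyEntry("customer", Context.CORPORATE,
                  frozenset({Concern.THESAURUS, Concern.ORGANIZATION})),
    OntologyEntry("maintenance contract", Context.CORPORATE,
                  frozenset({Concern.ORGANIZATION, Concern.DOCUMENTS})),
    OntologyEntry("trending topic", Context.SOCIAL, frozenset({Concern.THESAURUS})),
]

print(select(entries, context=Context.CORPORATE))
print(select(entries, concern=Concern.THESAURUS))
```

Keeping the context as a single value and the concerns as a set reflects the text: semantics are governed by one kind of authority, while the targeted items can overlap.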

From a broader perspective, ontologies could be influential in spanning the gap between explicit and implicit knowledge. Within a few years, practically unlimited access to raw data and the exponential growth in computing power have opened the door to massive sources of unexplored knowledge which is paradoxically both directly relevant and devoid of immediate meaning:

- Relevance: mined raw data is supposed to reflect the geology and dynamics of targeted markets.

- Meaning: the main value of that knowledge rests on its implicit nature; applying existing semantics would add little to existing knowledge.

Assuming that deep learning can transmute raw base metals into knowledge gold, ontologies would be decisive in framing the understanding, assessment, and improvement of the processes.

Operational Loop: Business Intelligence & Decision-making

Once carried out separately and periodically, decision-making is now to be carried out iteratively at operational, tactical, and strategic levels; while each level is to be set along its own time-frames, all are to rely on data mining, with cycles following the same pattern:

- Observation: understanding of changes in business opportunities.

- Orientation: assessment of the reliability and shelf-life of pertaining information with regard to current positions and operations.

- Decision: weighing of options with regard to enterprise capabilities and broader objectives.

- Action: carrying out of decisions within the relevant time-frame.
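The four steps can be sketched as a small pipeline. The Python code below is a toy illustration under stated assumptions: the `Observation` fields, the shelf-life test, and the ranking by a single `value` score are all hypothetical simplifications, not a prescribed decision method.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Observation: a change in business opportunities, as captured by data mining."""
    topic: str
    value: float          # hypothetical score of the opportunity
    age_days: int         # time elapsed since the data was collected
    shelf_life_days: int  # how long the information is assumed to stay reliable


def orient(observations):
    """Orientation: keep only information still within its shelf-life."""
    return [o for o in observations if o.age_days <= o.shelf_life_days]


def decide(observations, capacity):
    """Decision: weigh options against capabilities; keep the best affordable ones."""
    ranked = sorted(observations, key=lambda o: o.value, reverse=True)
    return [o.topic for o in ranked[:capacity]]


def act(decisions):
    """Action: carry out the decisions (here, simply report them)."""
    return [f"launch {topic}" for topic in decisions]


def cycle(observations, capacity):
    """One pass of the loop; each level would run it on its own time-frame."""
    return act(decide(orient(observations), capacity))


observations = [
    Observation("promo A", 0.8, age_days=2, shelf_life_days=7),
    Observation("promo B", 0.9, age_days=30, shelf_life_days=7),  # stale
    Observation("promo C", 0.5, age_days=1, shelf_life_days=7),
]

print(cycle(observations, capacity=1))
```

The same `cycle` could be run with different shelf-lives and capacities at operational, tactical, and strategic levels, which is one way to read the idea of a shared pattern with distinct time-frames.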

Given the new business playground, decision-making processes have to weave together material and digitized flows, actual contexts (aka territories) and symbolic descriptions (maps), and overlapping time-frames (operational, tactical, strategic). That operational loop could then be coupled with the broader one of business intelligence.
