Preamble

Appraising enterprises' capability and maturity means navigating between the fuzzy depths of business models and the marked features of supporting systems.

Business capabilities are by nature hard to pin down, given the innate diversity and volatility of circumstances and the fact that successes are the exception more than the rule. By contrast, systems capabilities are much easier to assess, as they can be defined in architectural terms.

Digital transformation may help to solve the dilemma by dissolving it: given the merging of systems and organization, the key success factor for enterprises is their capacity to assess changes and opportunities and adjust their processes and architectures accordingly. On that account, performance depends on the dynamic alignment of enterprises' representations (maps) with their business and technological environments (territories).

Enterprise architecture & environments

Based on a broadly accepted architecture paradigm epitomised by the Zachman framework, and figured by the Pagoda blueprint, enterprise environments and architectures are to be defined along three levels (aka tiers or layers):

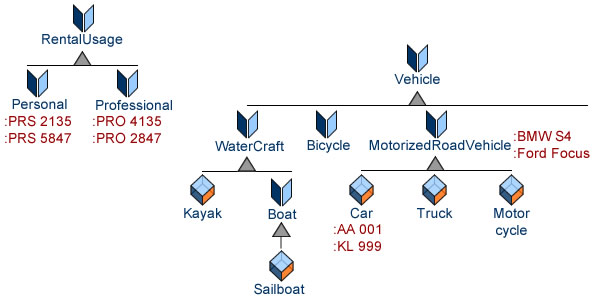

- Data level, for digital environment, operations, and platforms; described by physical and analytical models.

- Information level, for systems and engineering; described by logical and functional models.

- Knowledge level, for business objectives and organization; described by conceptual and process models.

That framework can be used to assess enterprises' capacity to change independently of business specificities.

Dealing with changes

Whereas absolute measurements are tied to valuation contexts, relative ones can be ecumenical, hence the benefit of targeting the capacity to change instead of trying to measure capacity by itself.

As far as enterprise architectures are concerned, changes can originate from business or technological environments, the former at process level, the latter at application level.

To begin with, as much change as possible should be dealt with at application level through organizational, functional, or operational adjustments, without affecting architectural assets. That is to be achieved through the versatility and plasticity (aka agility) of architectures.

When architectural changes are needed, their footprint can be layered in terms of Model Driven Architecture (MDA), i.e., computation independent (CIM), platform independent (PIM), and platform specific (PSM) models.

Ideally, changes should spread top-down from computation independent models to platform specific ones, with or without affecting platform independent ones. On that account, the footprint of changes rooted in the technical environment should be circumscribed to platform adaptations and charted by platform specific models.

By contrast, changes rooted in the business environment could induce changes in any or all architecture layers:

- Platform specific (PSM), e.g., when business logic is implemented by rules engines.

- Platform independent (PIM), e.g., new business functions.

- Computation independent (CIM), e.g., new business processes.
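The layering above can be sketched as a small lookup. This is only an illustration of the rule stated in the text: the names `Layer`, `Origin`, and `possible_footprint` are hypothetical and belong neither to MDA nor to the original argument.

```python
from __future__ import annotations

from enum import Enum


class Layer(Enum):
    CIM = "computation independent"
    PIM = "platform independent"
    PSM = "platform specific"


class Origin(Enum):
    TECHNICAL = "technical environment"
    BUSINESS = "business environment"


def possible_footprint(origin: Origin) -> set[Layer]:
    """Return the model layers a change may touch (hypothetical helper)."""
    if origin is Origin.TECHNICAL:
        # Ideally, technical changes stay confined to platform adaptations.
        return {Layer.PSM}
    # Business changes could induce changes in any or all layers.
    return {Layer.CIM, Layer.PIM, Layer.PSM}
```

A change whose actual footprint exceeds `possible_footprint(origin)` would be a signal that the layers are not as well separated as the ideal assumes.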

That model-based approach is to be used to define enterprise changes in terms of entropy.

Entropy & capacity to change

Change is a matter of time, especially for business, and a delicate balance is to be achieved between assessments (which improve when given time until they become redundant), and commitments (which risk missing opportunities if kept waiting for too long).

The issues can be expressed by applying the OODA (Observation, Orientation, Decision, Action) loop to system engineering:

- Changes in business and technology environments are observed at digital (e.g., data mining) or conceptual level (e.g., business intelligence) (a).

- Assessment deals with the reliability of observations as well as their meaning with regard to enterprise objectives, organization, and systems (b).

- Policies are updated and decisions made regarding the adjustment of objectives, resources, organization, or assets (c).

- Decisions are implemented as technical and business commitments (d).

On that basis, the capacity to change is to depend on:

- Osmosis: quality (accuracy, reliability,…), delay, and automaticity of observations with regard to changes in environments (data mining).

- Operational traceability across decisions, actions and observations (process mining, verification).

- Alignment of business and digital environments (validation).

- Consistency of architecture models (CIMs, PIMs, PSMs).

- Value chains and lean engineering: business logic (CIMs) directly embedded in software designs (PSMs).

With the benefits of digital transformation, these dimensions should be defined in terms of information processing.

Osmosis & blind spots

The generalization of digital exchanges between enterprises and their environment brings back the concept of entropy, defined by cybernetics as the quantum of energy within a system that cannot be put to use. Applied to enterprises, entropy can be understood as a blind spot on environment data, arguably a critical hindrance to their capacity to move and adjust. The primary objective should therefore be to minimize that blind spot, i.e., to maximize the outcome of data processing.

As digital environments bring about a level data playground, enterprises have to make better sense of it to get a competitive edge; hence the importance of a distinction between data and information, the former obtained from the environment, the latter obtained through the processing of the former. On that basis, the capacity to change, defined as the opposite of entropy, is to be determined by the way data is processed into information.

Digital osmosis, i.e., the exchange of digital data between enterprises' operations and environments, is clearly a primary factor: inbound streams can be mined so as to provide comprehensive and timely snapshots, and outbound streams can be woven into business processes, enhancing the capacity to translate decisions into action.

Digital osmosis could also bolster lean engineering with the direct integration of (digital) business logic into software (e.g., using rules engines), reinforcing the shortcuts between observations and decisions whenever orientation can be avoided. That would also enhance traceability between platform specific (PSMs) and operational models, as well as the monitoring of actions and the analysis of feedback (process mining).

Osmosis could be easily (if not accurately) estimated with the ratio between digitized flows and the whole of data flows, with measurements weighted by delays between observations and data processing.
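As a rough illustration of that estimate, the sketch below computes a delay-weighted share of digitized flows. The text does not specify how delays should weigh on the ratio, so the discount `volume / (1 + delay / horizon)` is an assumption, as are the names `Flow`, `osmosis_ratio`, and `horizon`.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Flow:
    volume: float        # size of the flow, in any consistent unit
    digitized: bool      # True if the flow is captured digitally
    delay: float = 0.0   # time between observation and data processing


def osmosis_ratio(flows: list[Flow], horizon: float = 1.0) -> float:
    """Delay-weighted share of digitized flows among all data flows.

    Assumption (not from the source): a digitized flow contributes
    volume / (1 + delay / horizon), so data that waits longer before
    being processed counts for less.
    """
    if horizon <= 0:
        raise ValueError("horizon must be positive")
    total = sum(f.volume for f in flows)
    if total == 0:
        return 0.0
    weighted = sum(
        f.volume / (1.0 + f.delay / horizon) for f in flows if f.digitized
    )
    return weighted / total


flows = [
    Flow(volume=100, digitized=True),             # processed immediately
    Flow(volume=50, digitized=True, delay=1.0),   # processed one horizon late
    Flow(volume=50, digitized=False),             # not digitized at all
]
ratio = osmosis_ratio(flows)  # (100 + 25) / 200 = 0.625
```

The unweighted ratio for the same flows would be 0.75; the gap between the two figures is the share of digitized data that arrives too late to be fully useful.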

A taxonomy of changes

Digital and business environments are not to be confused, and there is no reason to assume that their respective changes tally. As it happens, digital transformation may reinstall organizations at the nexus of changes by providing a powerful leverage on systems; and if entropy is considered, that is to be achieved through symbolic representations, aka models.

While names may vary, a distinction is generally made between changes according to their horizon, shorter for operational or tactical ones, longer for strategic ones. As so often with quantitative classifications, that understanding has been of limited use given the diversity of time-frames across industries. Digital transformation opens the door to a qualitative approach, defining changes according to the nature of their footprint:

- From the business perspective, it should restate the primacy of organization for the harnessing of IT benefits.

- From the architecture perspective, it would rank assets according to “digital modality”: symbolic (information, knowledge), tangible (e.g., platforms), or a combination of both (functional architecture).

Taking the strategic perspective, changes in technical or economic factors are to affect assets and processes for a large and often undetermined number of production cycles; they must consequently be set in time-frames extending beyond managed horizons (actual chronologies depend on industry specificities). Despite regular updates, supporting models and hypotheses may lose their relevance during the intervals, with the resulting entropy hampering the assessment of opportunities and policies. Such discrepancies can be circumscribed if planned organization and computation independent models (CIMs) are systematically checked against observations.

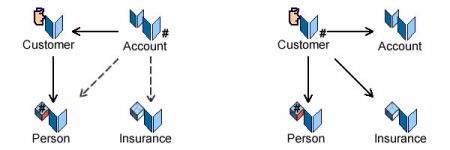

Compared to strategic ones, functional changes can be aligned with a limited number of production cycles, which irons out most of the discrepancies between maps and territories associated with strategic planning. Instead, entropy could arise from a lack of transparency and traceability between the models used to map organization and processes (CIMs) to functional (PIMs) and technical (PSMs) architectures.

Finally, operational changes can be carried out at process level, without affecting architectures. In that case entropy could result from a mismatch between the granularity of commitments and that of observations.

On that basis, planning and managing changes in digital and business environments should be driven by models.

Entropy & maturity

As defined by cybernetics, entropy can be explained by the discrepancies between environment states (aka micro-states) and their representation (macro-states). Applied to enterprises' environments and architectures, it would mean territories and operations for the former, maps and policies for the latter.

On that account the maturity of an organization should be assessed with regard to its ability to manage changes within and across environments:

- Consistency of changes within environments: between territories and operations (digital environments), between maps and policies (business environments).

- Validity of changes across environments: between maps and territories, and between policies and operations.
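One way to make such discrepancies tangible is to compare what a map represents with what is observed in the territory. The sketch below uses the Jaccard distance between two sets of element names; that choice of measure, the function name `discrepancy`, and the sample element names are all assumptions made for illustration, not a metric proposed by the text.

```python
from __future__ import annotations


def discrepancy(represented: set[str], observed: set[str]) -> float:
    """Jaccard distance between a map and a territory (assumed measure).

    Returns 0.0 when both sets match exactly and 1.0 when they share nothing.
    """
    union = represented | observed
    if not union:
        return 0.0
    return 1.0 - len(represented & observed) / len(union)


# Hypothetical example: elements described in models vs. elements observed.
maps = {"customer", "order", "invoice"}
territories = {"customer", "order", "shipment"}
gap = discrepancy(maps, territories)  # 2 shared out of 4 distinct -> 0.5
```

The same comparison could be applied to each of the four pairs listed above (territories and operations, maps and policies, maps and territories, policies and operations), giving a crude but uniform reading of where representations have drifted.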

Maturity levels could then be set according to the scope of managed entropy:

- Digital osmosis: all exchanges with environments come with a digital counterpart, sorted into operational data, data attached to managed information, and environment data detached from managed information.

- Digital value chains: digital integration of business and engineering processes ensuring transparency and traceability along value chains.

- Organizational pivot: value chains can be redefined so as to make the best of information assets.

- Knowledge based architectures: enterprise layers (platforms, systems, organization) are aligned with data, information, and knowledge layers, leveraging the benefits of machine learning across enterprise organization and systems.
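The four levels can be read as an ordered scale. The sketch below assumes that levels are cumulative, i.e., that a level only counts once all lower ones are achieved; that reading, like the names `Maturity` and `maturity_level`, is an assumption rather than something the text states outright.

```python
from __future__ import annotations

from enum import IntEnum


class Maturity(IntEnum):
    NONE = 0
    DIGITAL_OSMOSIS = 1
    DIGITAL_VALUE_CHAINS = 2
    ORGANIZATIONAL_PIVOT = 3
    KNOWLEDGE_BASED_ARCHITECTURES = 4


def maturity_level(achieved: set[Maturity]) -> Maturity:
    """Highest level reached with every lower level also achieved."""
    level = Maturity.NONE
    # Enum members iterate in definition order, i.e., from lowest to highest.
    for step in list(Maturity)[1:]:
        if step not in achieved:
            break
        level = step
    return level


# A pivot without digital value chains does not count under this reading.
partial = maturity_level(
    {Maturity.DIGITAL_OSMOSIS, Maturity.ORGANIZATIONAL_PIVOT}
)
```

Here `partial` evaluates to `Maturity.DIGITAL_OSMOSIS`, since the second level is missing.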

As it’s safe to assume that scaling maturity levels across enterprise architectures can only be carried out progressively, progress has to be backed up by a comprehensive and ecumenical repository of physical and symbolic resources and assets, and be supported by workflows combining iterative (continuous and business driven) and model-based (phased, architecture driven) engineering processes.
