Following the recent publication of a new standard for conceptual modeling of automation systems (Object-Process Methodology (ISO/PAS 19450:2015) it may be interesting to explore how it relates to abstraction and meta-models.

Models & Meta Models

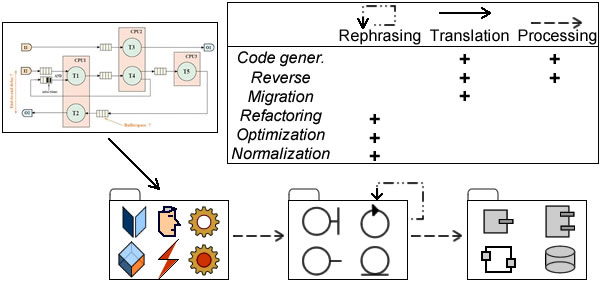

Just like models are meant to describe sets of actual instances, meta-models are meant to do the same for sets of modeling artifacts independently of their targets. Along that reasoning, conceptual modeling of automation systems could be achieved either with a single language covering all aspects, or with a meta-language dealing with different sets of models, e.g MDA’s computation independent, platform independent, and platform specific models.

Given a model based engineering framework (e.g MDA), meta-models are generally used to support downstream models transformation targeting designs and code. But when upstream conceptual models are concerned, the challenge is to tackle the knowledge-to-systems transition. For that purpose some shared modeling roof is required for the definition of the symbolic footprint of the targeted business in the automation system under consideration.

Symbolic Footprint

Given that automation systems are meant to manage symbolic objects (aka surrogates), one should expect the distinction between actual instances and their symbolic representations to be the cornerstone of corresponding modeling languages. Along that reasoning, modeling of automation systems should start with the symbolic representation of actual business footprints, namely: the sets of objects, events, and processes, the roles played by agents (aka active objects), and the description of the associated states and rules. Containers would be added for the management of collections.

Next, as illustrated by the Object/Agent hierarchy, business worlds are not flat but built from sundry structures and facets to be represented by multiple levels of descriptions. That’s where abstractions are to be introduced.

Abstraction & Variants

The purpose of abstractions is to manage variants, and as such they can be used in two ways:

- For partial descriptions of actual instances depending on targeted features. That can be achieved using composition (for structural variants) and partitions (for functional ones).

- As hierarchies of symbolic descriptions (aka types and sub-types) subsuming variants identified at instances level.

On that basis the challenge is to find the level of detail (targeted actual instances) and abstraction (symbolic footprint) that will best describe supporting systems functionalities. Such level will have to meet two conditions:

- A minimal number of comprehensive and exclusive categories covering the structural variants of the sets of instances to be uniformly, consistently, and continuously identified by both enterprise and supporting systems.

- A consistent but adjustable set of types and sub-types anchored to the core structural categories and covering the functional variants .

Climbing up and down abstraction ladders looking for right levels is arguably the critical part of conceptual modeling, but the search will greatly benefit from the distinction between models and meta-models. Assuming meta-models are meant to ignore domain specific features altogether, they introduce a qualitative gap on abstraction scales as the respective hierarchies of models and meta-models are targeting different kind of instances. The modeling of agents and roles epitomizes the benefits of that distinction.

Abstraction & Meta Models

Taking customers for example, a naive approach would use Customer as a modeling type inheriting from a super-type, e.g Party. But then, if parties are to be uniformly identified (#), that would preclude any agent for playing multiple roles, e.g customer and supplier.

A separate description of parties and roles would clearly be a better option as it would unify the identification of the former without introducing unwarranted constraints on the latter which would then be defined and identified as the realization of a relationship played by a party.

Not surprisingly, that distinction would also be congruent with the one between models and meta-model:

- Meta-models will describe generic aspects independently of domain-specific considerations, in particular organizational context (units and roles) and interactions with systems (a).

- Models will define Staff, Supplier and Customer according to the semantics of the business considered (b).

That distinction between abstraction scales can also be applied to the conceptual modeling of automation systems.

Abstraction Scales & Conceptual Models

To begin with definitions, conceptual representations could be used for all mental constructs, whereas symbolic representations would be used only for the subset earmarked for communication purposes. That would mean that, contrary to conceptual representations that can be detached of business and enterprise practicalities, symbolic representations are necessarily built on design, and should be assessed accordingly. In our case the aim of such representations would be to describe the exchanges between business processes and supporting systems.

That understanding neatly fits the conceptual modeling of automation systems whose purpose would be to consolidate generic and business specific abstraction scales, the former for symbolic representations of the exchanges between business and systems, the latter symbolic representation of business contents.

At this point it must be noted that the scales are not necessarily aligned in continuity (with meta-models’ being higher and models’ being lower) as their respective ontologies may overlap (Organizational Entity and Party) or cross (Function and Role).

Toward an Ontological Framework for Enterprise Architectures Modeling

Along an analytic perspective, ontologies are meant to determine the categories that can comprehensively and consistently denote the instances of a domain under consideration; applied to enterprise concerns that would entail:

- Thesaurus: for the whole range of terms and concepts.

- Documents: for documents with regard to topics.

- Business: for enterprise organization and business objects and activities.

- Engineering: for systems surrogates associated to enterprise organization and business objects and activities

That would open the door to a seamless integration of business intelligence, systems engineering, knowledge management, and decision-making.

Further Reading

- Open Concepts

- Open Concepts Will Make You Free

- Conceptual Thesaurus

- UML’s Semantic Master Key, Lost & Found

- Knowledge Architecture

- Modeling Paradigm

- Modeling languages: differences matter

- Caminao & Archimate

- On Pies & Skies: Abstraction in Models

- Parties

- Customers/Suppliers