Objective

Contrary to its manufacturing cousin, a long-time devotee of preventive policies, software engineering is still ambivalent regarding the benefits of integrating quality management with development itself. That certainly should raise some questions, as one would expect the quality of symbolic artifacts to be much easier to manage than that of their physical counterparts, if for no other reason than the former only has to check symbolic outcomes against symbolic specifications while the latter must also overcome the contingencies of non-symbolic artifacts.

Thanks to agile approaches, lessons from manufacturing are progressively being learned, with lean and just-in-time principles making tentative inroads into software engineering. Taking advantage of the homogeneity of symbolic development flows, agile methods have forsaken phased processes in favor of iterative ones, making a priority of continuous and value-driven deliveries to business users. Instead of predefined sequences of dedicated tasks, products are developed through iterations regrouping definition, building, and acceptance into the same cycles. That pushes differentiated documentation and models into the back seat, and may also introduce a new paradigm by putting tests in the driving seat.

From Phased to Iterative Tests Management

Traditional (aka phased) processes follow a corrective strategy: tests are performed according to a Last In First Out (LIFO) framework, i.e., what is specified last is tested first: components (unit tests), then system (integration), then business (acceptance). As a consequence, faults in functional architecture risk being identified only after components are completed, and flaws in organization and business processes may not emerge before the integration of system functionalities. In other words, the faults with the most wide-ranging consequences may be the last to be detected.

Iterative approaches follow a preemptive strategy: the sooner artifacts are tested, the better. The downside is that without differentiated and phased objectives, there is a question mark on the kind of specifications against which software products are to be tested; likewise, the question is how results are to be managed across iteration cycles, especially if changing requirements are to be taken into account.

Looking for answers, one should first consider how requirements taxonomy can support tests management.

Requirements Taxonomy and Tests Management

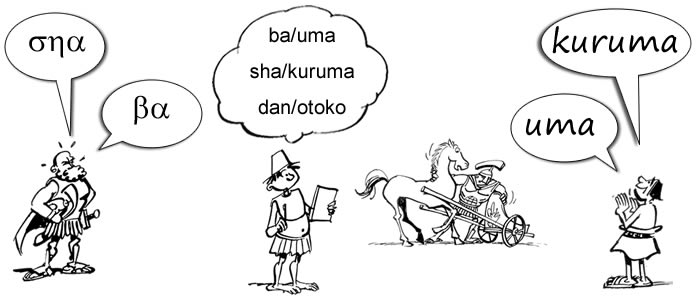

Whatever the methods or forms (users’ stories, use cases, functional specifications, etc.), requirements are meant to describe what is expected from systems, and as such they have two main purposes: (1) to serve as a reference for architects and engineers in software design and (2) to serve as a reference for tests and acceptance.

With regard to those purposes, phased development models have been providing clearly defined steps (e.g., requirements, analysis, design, implementation) and corresponding responsibilities. But when iterative cycles are applied to progressively refined requirements, those “facilities” are no longer available. Nonetheless, since tests and acceptance are still to be performed, a requirements taxonomy may replace phased steps as a testing framework.

Taxonomies being built on purpose, one supporting iterative tests should consider two criteria, one driven by targeted contents, the other by modus operandi.

With regard to contents, requirements must be classified depending on who’s to decide: business and functional requirements are driven by users’ value and directly contribute to business experience; non-functional requirements are driven by technical considerations. Overlapping concerns are usually regrouped as quality of service.

That distinction between business and architecture driven requirements is at the root of portfolio management: projects with specific business stakeholders are best developed with the agile development model, whereas architecture driven projects set across business domains may call for phased schemes.

That requirements taxonomy can be directly used to build its testing counterpart. As developed by D. Leffingwell (see selected readings), tests should also be classified with regard to their modus operandi, the distinction being between those that can be performed continuously along development iterations and those that are only relevant once products are set within their technical or business contexts. As it happens, those requirements and tests classifications are congruent:

- Unit and component tests (Q1) cover technical requirements and can be performed on development artifacts independently of their functionalities.

- Functional tests (Q2) deal with system functionalities as expressed by users (e.g., with stories or use cases), independently of operational or technical considerations.

- System acceptance tests (Q3) verify that those functionalities, when performed at enterprise level, effectively support business processes.

- System qualities tests (Q4) verify that those functionalities, when performed at enterprise level, are supported by architecture capabilities.
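The congruence between the two classifications can be sketched as a simple lookup. This is only an illustration of the four quadrants listed above: the names `Concern`, `Mode`, and `classify` are assumptions introduced for the example, not part of any established framework.

```python
from enum import Enum


class Concern(Enum):
    """Contents criterion: who is to decide (hypothetical naming)."""
    BUSINESS = "business"    # driven by users' value
    TECHNICAL = "technical"  # driven by technical considerations


class Mode(Enum):
    """Modus operandi criterion (hypothetical naming)."""
    ITERATIVE = "iterative"    # performed continuously along iterations
    CONTEXTUAL = "contextual"  # relevant once products are set in context


# Quadrant labels follow the list above.
QUADRANTS = {
    (Concern.TECHNICAL, Mode.ITERATIVE): "Q1: unit and component tests",
    (Concern.BUSINESS, Mode.ITERATIVE): "Q2: functional tests",
    (Concern.BUSINESS, Mode.CONTEXTUAL): "Q3: system acceptance tests",
    (Concern.TECHNICAL, Mode.CONTEXTUAL): "Q4: system quality tests",
}


def classify(concern: Concern, mode: Mode) -> str:
    """Return the test quadrant for a pair of criteria."""
    return QUADRANTS[(concern, mode)]
```

For instance, `classify(Concern.BUSINESS, Mode.ITERATIVE)` returns the Q2 label, i.e., functional tests performed along development iterations.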

Besides the specific use of each criterion in deciding who’s to handle tests, and when, combining criteria brings additional answers regarding automation: product acceptance should be performed manually at business level, preferably by tools at system level; tests performed along development iterations can be fully automated for units and components (white-box), but only partially for functionalities (black-box).

That tests classification can be used to distinguish between phased and iterative tests: the organization of tests targeting products and systems from business (Q3) or technology (Q4) perspectives is clearly not supposed to be affected by development models, phased or iterative, even if resources used during development may be reused. That’s not the case for the organization of the tests targeting functionalities (Q2) or components (Q1).

Iterative Tests

Contrary to tests aiming at products and systems (Q3 and Q4), those performed on development artifacts cannot be set on fixed and well-defined specifications: being managed within iteration cycles they must deal with moving targets.

Unit and component tests (Q1) are white-box operations meant to verify the implementation of functionalities; as a consequence:

- They can be performed iteratively on software increments.

- They must take into account technical requirements.

- They must be aligned with the implementation of tested functionalities.

Hence, if unit and component tests are to be performed iteratively, (1) they must be set against features, and (2) functional tests must be properly documented and available for reuse.

Functional tests (Q2) are black-box operations meant to validate system behavior with regard to users’ expectations; as a consequence:

- They can be performed iteratively on software increments.

- They don’t have to take into account technical requirements.

- They must be aligned with business requirements (e.g., users’ stories or use cases).

Assuming (see previous post) a set of stories (a,b,c,d) identified by alternative paths built from features (f1…5), functional tests (Q2) are to be defined and performed for each story, and then reused to test the implementation of associated features (Q1).

At that point two questions must be answered:

- Given that stories can be changed, expanded or refined along development iterations, how is the association between requirements and functional tests to be managed?

- Given that backlogs can be rearranged along development cycles according to changing priorities, how are tests to be updated, traceability managed, and regression prevented?

With model-driven approaches no longer available, one should consider a mirror alternative, namely test-driven development.

Tests Driven Development

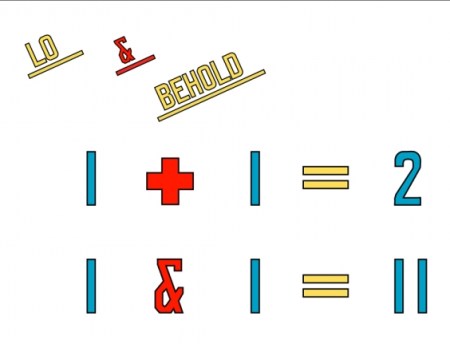

Test-driven development can be seen as a mirror image of model-driven development, a somewhat logical consequence considering the limited role of models in agile approaches.

The core of agile principles is to put the definition, building and acceptance of software products under shared ownership, direct collaboration, and collective responsibility:

- Shared ownership: a project team groups users and developers and its first objective is to consolidate their respective concerns.

- Direct collaboration: decisions are taken by team members, without any organizational mediation or external interference.

- Collective responsibility: decisions about stories, priorities and refinements are negotiated between team members from both sides of the business/system (or users/developers) divide.

Assuming those principles are effectively put to work, there seems to be little room for organized and persistent documentation, as users’ stories are meant to be developed, and products released, in continuity, and changes introduced as new stories.

With such lean and just-in-time processes, documentation, if any, is by nature transient, falling short as a support of test plans and results, even when problems and corrections are formulated as stories and managed through backlogs. In such circumstances, without specifications or models available as development handrails, could tests play that role?

To begin with, users’ stories have to be reconsidered. The distinction between functional tests on one hand, unit and component tests on the other hand, reflects the divide between business and technical concerns. While those concerns may be mixed in users’ stories, they are progressively set apart along iteration cycles. It means that users’ stories are, by nature, transitory, and as a consequence cannot be used to support tests management.

The case for features is different. While they cannot be fully defined up-front, features are not transient: being shared by different stories and bound to system functionalities they are supposed to provide some continuity. Likewise, notwithstanding their changing contents, users’ stories should be soundly identified by solution paths across the problem space.

That can provide a stable framework supporting the management of development tests:

- Unit tests are specified from the crossings between solution paths (described by stories or scenarios) and features.

- Functional tests are defined by solution paths and built from unit tests associated with the corresponding features.

- Component tests are defined by features and built by the consolidation of unit tests defined for each targeted feature according to technical constraints.

The margins of that grid (solution paths on one side, features on the other) support continuous and consistent identification of functional and component tests, whose contents can be extended or updated through changes made to unit tests.
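The scheme can be sketched with the stories (a, b, c, d) and features (f1…5) mentioned earlier. The paths assigned to each story below are purely hypothetical, as are the function names; the point is only to show unit tests identified by crossings, with functional and component tests derived from them.

```python
# Hypothetical solution paths: the features each story goes through.
STORIES = {
    "a": ["f1", "f2", "f4"],
    "b": ["f1", "f3", "f4"],
    "c": ["f2", "f3", "f5"],
    "d": ["f1", "f5"],
}


def unit_tests(stories):
    """One unit test identifier per (story, feature) crossing."""
    return {(story, feature)
            for story, path in stories.items()
            for feature in path}


def functional_test(stories, story):
    """Unit tests along a story's solution path, in path order."""
    return [(story, feature) for feature in stories[story]]


def component_test(stories, feature):
    """Unit tests targeting one feature, consolidated across stories."""
    return sorted((story, feature)
                  for story, path in stories.items()
                  if feature in path)
```

With these assumed paths, `functional_test(STORIES, "a")` lists the crossings of story a with f1, f2 and f4, while `component_test(STORIES, "f1")` gathers the crossings of f1 with stories a, b and d. Adding a feature to a story's path changes both derived tests without changing how either is identified.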

One step further, and tests can even be used to drive iteration cycles: once features and solution paths are soundly identified, there is no need to swell backlogs with detailed stories whose shelf life will be limited. Instead, development processes would get leaner if extensions and refinements could be directly expressed as unit tests.
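As a rough illustration of a refinement expressed as a unit test rather than as a new story, consider the sketch below. Everything in it is hypothetical: the `shipping_fee` feature, its threshold, and the story and feature labels are invented for the example and use only Python's standard `unittest` module.

```python
import unittest


def shipping_fee(order_total: float) -> float:
    """Hypothetical feature: a flat fee, waived from 50.0 upward."""
    return 0.0 if order_total >= 50.0 else 5.0


class ShippingFeeRefinement(unittest.TestCase):
    """Identified by a crossing, e.g. story 'a' and feature 'f2' (assumed)."""

    def test_fee_applies_below_threshold(self):
        self.assertEqual(shipping_fee(49.99), 5.0)

    def test_fee_waived_at_threshold(self):
        # The refinement itself, stated as a test instead of a backlog story.
        self.assertEqual(shipping_fee(50.0), 0.0)


def run_refinement_tests() -> bool:
    """Run the refinement tests and report whether all of them passed."""
    loader = unittest.defaultTestLoader
    suite = loader.loadTestsFromTestCase(ShippingFeeRefinement)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

In such a setup the test case, not a detailed story, carries the refinement, and it stays attached to the same story and feature identifiers across iterations.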

System Quality and Acceptance Tests

Contrary to development tests, which are applied iteratively to programs, system tests are applied to released products and must take into account requirements that cannot be directly or uniquely attached to users’ stories, either because they cannot be expressed from a business perspective or because they are shared concerns and best described as features. Tests for those requirements will be consolidated with development ones into system quality and acceptance tests:

- System Quality Tests deal with performance and resources from the system management perspective. As such they will combine component and functional tests in operational configurations without taking into account their business contents.

- System Acceptance Tests deal with the quality of service from the business process perspective. As such they will perform functional tests in operational configurations taking into account business contents and users’ experience.

Requirements set too early and quality checks performed too late are at the root of the predicaments of phased processes, and that can be fixed with a two-pronged policy: a preemptive policy based upon a requirements taxonomy organizing problem spaces according to concerns (business value, system functionalities, component designs, platform configurations); and a corrective policy driven by the exploration of solution paths, with developments and releases driven by quality concerns.

Tests & Framework

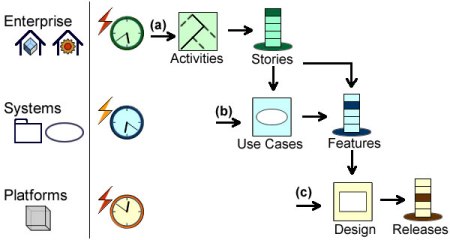

Insofar as large and complex enterprise architectures are concerned, it’s safe to assume that different development models (agile or phased) and tests policies (unit, system, acceptance, …) will have to cohabit, and that would not be possible without an architecture framework:

- Development or unit tests are defined at platform level and applied to software components.

- Integration or system tests are defined at system level and built from tested components.

- Acceptance tests are defined at enterprise level and built from tested functionalities.

From a broader perspective, such a framework is meant to provide the foundation of enterprise architecture workflows.

Further Reading

- Fingertips Errors & Automated Testing

- Quality Management

- Requirements Taxonomy

- Agile and Models

- Requirements and Architecture Capabilities

External Links